|   |

Abstract

Machine Learning on graphs, and more generally non-euclidean structured data, has recently emerged as a primary citizen of the machine learning world, with many applications in community detection, recommender systems, natural language processing, molecule classification, protein interface prediction, quantum chemistry, epidemiology, combinatorial optimization… and so on. In particular, deep models such as Graph Neural Networks have become very popular tools, with their successes, limitations, and a plethora of open questions.

This day aims to bring together researchers and students to discuss recent advances in Graph Machine Learning, both theoretical and practical. Topics include, but are not limited to:

- Graph Neural Networks, graph kernels

- Statistics on graphs, random graphs

- Graph Signal Processing

- …

Invited speakers

- Pierre Borgnat (CNRS, ENS Lyon)

Representation Learning for Attributed Graphs

Graphs are discrete structures apt at coding relationships between entities, coded as nodes, while signals (or attributes) on these nodes characterise the own behaviour of each constituant. In Graph Signal Processing (GSP) and Machine Learning, there is an increasing focus of designing learnable methods for representation of attributed graphs (of graph signals), where one takes into account both structure and attributes, while having correct numerical complexity. We will present two such models having a scalable complexity: i) one aiming at the multiscale representation of attributed graphs, and ii) a method aiming at learning the metrics between graphs. The common ground is to use simple Graph Neural Networks as basic brick of nonlinear representation of graphs, while GSP approaches are leveraged to compute a multiscale representation (i) or a learnable metric (ii).

- Johannes Lutzeyer (LIX)

Graph Shift Operators and Their Relevance to Graph Neural Networks

Graph Neural Networks (GNNs) have celebrated many academic and industrial successes in the past years; providing a rich ground for theoretical analysis and achieving state-of-the-art results in several learning tasks. In this talk, we will discuss several approaches to this theoretical analysis with a focus on the role of Graph Shift Operators (GSOs) in GNNs. Informally speaking, GSOs are matrices representing graph structures with the adjacency and Laplacian matrices being the most common GSO choices. We will illustrate this intersection of GNNs and GSOs by looking at an example where we optimise a parametrised GSO in GNN frameworks, and thereby optimally represent graphs leading to performance improvements and interpretable results. We will furthermore discuss theoretical work establishing the potential advantages of enriching the chosen GSO with node feature information. Finally, we will take a look at how the addition of prior cluster information to the GSO can render Graph Autoencoders more suitable for joint community detection and link prediction on various real-world graphs, notably including industrial-scale graphs provided by the Deezer music streaming service.

- Catherine Matias (CNRS, LPSM)

Spectral density of random graphs: convergence properties and application in model fitting

Random graph models are used to describe the complex structure of real-world networks in diverse fields of knowledge. Studying their behaviour and fitting properties are still critical challenges that, in general, require model-specific techniques. An important line of research is to develop generic methods able to fit and select the best model among a collection. Approaches based on spectral density (i.e. distribution of the graph adjacency matrix eigenvalues) appeal to that purpose: they apply to different random graph models. Also, they can benefit from the theoretical background of random matrix theory. This work investigates the convergence properties of model fitting procedures based on the graph spectral density and the corresponding cumulative distribution function. We also review the convergence of the spectral density for the most widely used random graph models. Moreover, we explore through simulations the limits of these graph spectral density convergence results, particularly in the case of the block model, where only partial results have been established.

- Clotilde Melot (I2M, CMI, Université Aix Marseille)

Intertwinning wavelets or multiresolution analysis on graphs through random forests

Several methods are available to analyze signals on graphs, i.e functions defined on the vertices of a finite connected weighted graph. Fourier analysis requires the computation of the eigenvalues and eigenvectors of the graph Lapla- cian, it is also a non-local transformation. In this communication we propose a multiresolution scheme which provides well localized basis functions without requiring spectral computations. The approach relies on probabilistic tools: a random spanning forest to downsample the set of vertices, and approximate so- lutions of Markov intertwining relation to provide a subgraph structure and a filterbank which is a basis of the set of functions. As a by-product, the method provides a graph coarse- graining procedure. We illustrate the method by nu- merical experiments computed with the Python Package IntertwiningWavelet developped by Dominique Benielli (Labex Archimède, Université Aix-Marseille) to process the method. (details)

Call for participation

The call for participation is now closed.

Program

- 8h55 - Introduction

- 9h00 - Keynote talk: Catherine Matias (CNRS, LPSM). Spectral density of random graphs: convergence properties and application in model fitting Slides

- 9h45 - Cedric Vincent Cuaz (INRIA Sophia Antipolis). Semi-relaxed Gromov-Wasserstein divergence with applications on graphs

- 10h15 - Coffee break

- 10h30 - Keynote talk: Clotilde Melot (I2M, CMI, Université Aix Marseille). Intertwinning wavelets or multiresolution analysis on graphs through random forests

- 11h15 - Simon Coste (ENS). Spectral algorithms for directed sparse graphs

- 11h45 - Clément Vignac (EPFL). Top-n: Equivariant Set and Graph Generation without Exchangeability Slides

- 12h15 - Lunch

- 14h00 - Keynote talk: Pierre Borgnat (CNRS, ENS Lyon). Representation Learning for Attributed Graphs Slides

- 14h45 - Poster session & coffee break

- 16h00 - Keynote talk: Johannes Lutzeyer (LIX). Graph Shift Operators and Their Relevance to Graph Neural Networks Slides

- 16h45 - Cristian Bodnar (University of Cambridge). Neural Sheaf Diffusion: A Topological Perspective on Heterophily and Oversmoothing in GNNs

- 17h15 - Patty Coupeau (Université d’Angers). On the relevance of edge-conditioned convolution for GNN-based semantic image segmentation using spatial relationships Slides

Poster session

- Amitoz Azad (University of Caen). Learning Label Initialization for Time-Dependent Harmonic Extension

- Alexis Blandin (IRISA). Représentation de newsletters en graphes hétérogènes : modélisation et bénéfices

- Lorenzo Dall Amico (ISI, GIPSA-lab). Spectral Methods For Graph Clustering Poster

- Jhony H. Giraldo (La Rochelle Université). Weakly-Supervised Semantic Segmentation via Hypergraph Convolutional Networks

- Mikhail Kamalov (INRIA Sophia Antipolis). Graph diffusion & PCA framework for semi-supervised learning

- Yusuf Yigit Pilavci (GIPSA-lab). Variance reduction in stochastic methods for large-scale regularised least-squares problems

- Harry Sevi (Université Paris Saclay). Generalized Spectral Clustering for Directed and Undirected Graphs

- Slimane Thabet (Pasqal, LIP6). Quantum evolution kernel : Machine learning on graphs with programmable arrays of qubits

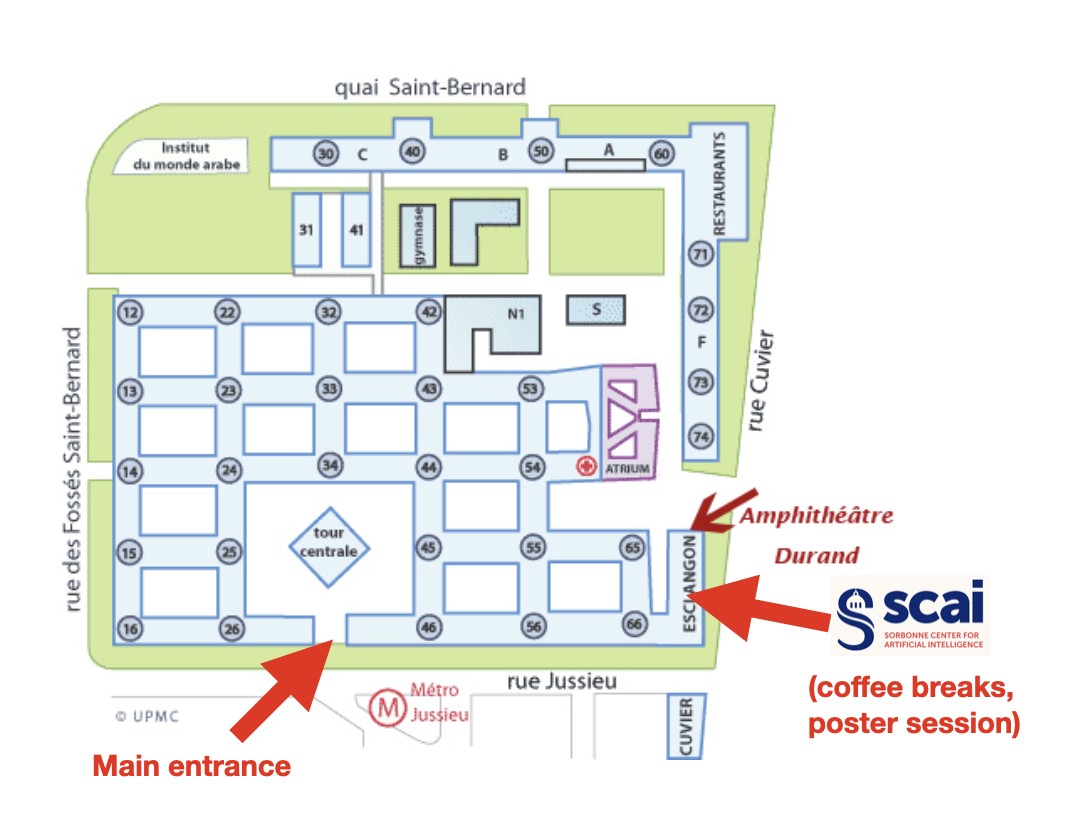

Map

Organizers

- Edouard Oyallon (CNRS, ISIR) edouard.oyallon@cnrs.fr

- Nicolas Keriven (CNRS, GIPSA-lab) nicolas.keriven@cnrs.fr